Running an exam prep or credential program comes with pressure. Learners expect to pass, your industry expects credibility, and in many cases, regulators expect compliance. A single weak link can affect outcomes, reputation, and long-term growth.

That pressure becomes clear the moment you evaluate your technology.

Many providers start with a general course platform that handles lessons and basic quizzes well enough at first. It looks clean and feels simple. As enrollment grows and exam standards tighten, though, limitations surface. Practice exams feel constrained. Reporting lacks depth. Tracking performance across exam domains becomes manual and time-consuming.

Other teams go in the opposite direction. They invest in proctoring systems, credential platforms, analytics dashboards, and custom integrations before their program structure is fully defined. The result is a stack that feels heavy, expensive, and difficult to manage.

Most certification providers eventually ask the same questions:

Are we missing something critical?

Are we overbuilding?

Is our LMS enough?

These decisions shape learner outcomes, reporting clarity, instructor workload, and how smoothly your program scales.

Exam prep programs require more than content delivery. They depend on aligned assessments, visible performance trends, formal credential tracking, and operational control working together. This guide breaks the exam prep tech stack into functional layers so you can evaluate what each one contributes and make informed decisions about where to invest.

Skip ahead:

- Why exam prep requires a specialized tech stack

- How the exam prep tech stack fits together

- Common mistakes certification providers make

- How to tell if your current stack is underpowered

- Choosing the right stack for your stage

- Build for readiness, not complexity

- FAQs

Why exam prep requires a specialized tech stack

Exam prep operates under higher stakes than most online learning models.

Learners enroll to pass a defined exam, and organizations run these certifications to protect credibility, maintain pass rates, and meet compliance requirements. That focus shapes how the underlying systems need to function.

Most credential-driven programs follow a formal blueprint, which means content must align directly to tested domains and progress must reflect performance within those domains, not just lesson completion. Learners revisit material, retake assessments, and focus on weak areas across the exam cycle. That kind of repeated practice only works if they can see where they actually stand within each tested domain.

Credential integrity adds another layer. Some providers issue certificates of completion, while others grant formal certifications tied to renewal cycles, continuing education requirements, or external verification. Your records need to stand up to scrutiny, especially when regulators or accreditation bodies are involved.

Scale raises the stakes further. Programs serving large cohorts or enterprise clients can’t rely on surface-level metrics because small structural weaknesses grow quickly as enrollment increases.

Alignment, performance insight, and validation processes that hold up under scrutiny are what separate a credible certification program from a basic course offering.

In practice, that comes down to three concrete requirements: content structured around tested domains, continuous visibility into where learners stand within each of those domains, and formal credential validation tied to documented standards. Your technology needs to support each of them deliberately, and that’s where the layers of an exam prep tech stack come in.

How the exam prep tech stack fits together

The shape of the technology stack follows from the program’s requirements.

An exam prep stack consists of connected systems, each responsible for a distinct stage of the learner lifecycle. Together, they support enrollment, preparation, evaluation, credential issuance, and renewal.

The learning delivery platform anchors the system, organizing content and learner progression. It plays a central role but doesn’t cover every operational or compliance requirement.

Most credential-driven programs rely on five layers:

- Learning delivery: Course structure, progression, and the exam blueprint

- Assessment: Practice exams, question banks, and readiness measurement

- Analytics: Domain-level performance data and program visibility

- Credentialing: Certificate generation, verification, and renewal tracking

- Operations and communication: Payments, access control, and learner guidance

For most providers, learning delivery, assessment, and analytics form the core foundation. Credentialing and proctoring become more critical as exam stakes, regulatory requirements, and external accountability increase. Distinguishing between foundational and conditional layers helps prevent unnecessary tooling early on.

Each layer solves a specific problem. Not every provider requires deep tooling across all five areas. Stack decisions should reflect program maturity, enrollment volume, and regulatory expectations rather than ambition alone.

1. Learning delivery platform: the foundation

Every exam-based program begins with a learning delivery platform.

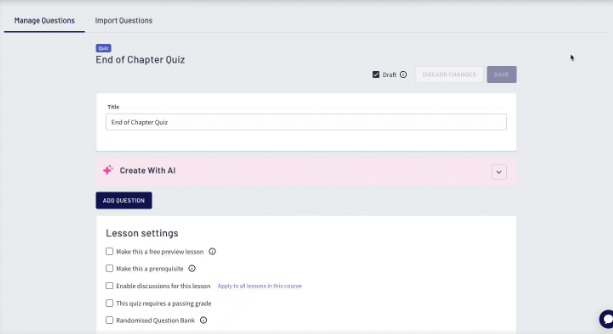

This system houses content, defines progression, and tracks learner activity against the exam blueprint.

Alignment is critical. If your credential tests five domains, your course architecture should mirror those domains so learners can see progress in each domain.

Modular organization supports that alignment. Chapters correspond to exam domains, lessons build from core instruction toward applied practice, and assessments appear at planned checkpoints rather than only at the end. That structure mirrors how the actual exam tests knowledge — progressively and by area.

Mixed formats strengthen preparation. Instruction, knowledge checks, simulations, and cumulative exams should live within one coherent environment rather than being scattered across separate tools.

Learner experience shapes perception of credibility. Clear navigation and visible progress indicators reinforce the seriousness of the credential.

Scalability also influences platform selection. Enrollment growth affects reporting, administrative workflows, and learner access. The system must support increasing volume without creating operational strain.

When evaluating a platform, consider:

- Does the structure reflect our exam blueprint?

- Can learners move logically from instruction to simulation?

- Can we review performance across the tested areas?

- Will this system support projected enrollment growth?

A provider offering a four-domain financial credential might organize the program into four primary modules aligned to the official blueprint. Each module concludes with an assessment tied to that tested area. Completing all four modules unlocks a cumulative practice exam that draws randomized questions from every domain. Reporting then highlights strengths and weaknesses within each tested domain, showing learners where to focus their study.
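To make that structure concrete, here is a minimal Python sketch of the four-domain example. It is not a Thinkific API: the `Module` class, the domain names, and the blueprint weights are hypothetical placeholders. It encodes two ideas from the example: each module maps to one blueprint domain, and the cumulative practice exam unlocks only once every module's checkpoint assessment is complete.

```python
from dataclasses import dataclass


@dataclass
class Module:
    """One course module mapped to one exam blueprint domain (hypothetical model)."""
    domain: str
    blueprint_weight: int  # percent of the real exam covered by this domain (placeholder values)
    checkpoint_complete: bool = False


def cumulative_exam_unlocked(modules: list[Module]) -> bool:
    """The cumulative practice exam opens only after every module's checkpoint assessment."""
    return all(m.checkpoint_complete for m in modules)


# Hypothetical four-domain financial credential; not a real blueprint.
course = [
    Module("Ethics and Standards", 15),
    Module("Financial Analysis", 35),
    Module("Portfolio Management", 30),
    Module("Regulation", 20),
]

# Between them, the modules should cover the whole blueprint.
assert sum(m.blueprint_weight for m in course) == 100

for m in course[:3]:
    m.checkpoint_complete = True
print(cumulative_exam_unlocked(course))  # False: the Regulation checkpoint is still open

course[3].checkpoint_complete = True
print(cumulative_exam_unlocked(course))  # True
```

Keeping the domain on the module itself is what later makes domain-level reporting possible without manual tagging.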

Learner experience deserves the same attention as architecture. In exam prep, that means clear domain-level progress indicators so learners can see exactly where they stand, not just whether they’ve completed lessons. It means accessible retake workflows that don’t create friction when someone needs another attempt. And it means a consistent environment where instruction, practice, and assessment feel like one program rather than separate tools stitched together. Credibility starts with how the program feels to the person preparing for the exam.

Integration matters here too. The learning platform doesn’t operate in isolation. Assessment engines, performance data, and credentialing systems all need to connect back to it, so evaluating integration flexibility at the platform selection stage prevents disconnected tools later.

A platform like Thinkific provides a scalable learning environment that supports blueprint-aligned structure and integrated assessment workflows. You can expand into additional layers as the program’s demands evolve, while keeping the learning system stable at the core.

2. Assessment and practice exams

Assessment determines whether candidates are prepared to attempt the formal exam.

Lesson-level quizzes capture recall. Credential programs often require deeper competency validation under realistic constraints. Learners must apply knowledge within time limits and identify gaps across tested domains.

Question flexibility allows for closer alignment with the exam structure. Multiple-choice may form the base, while scenario-based or calculation-driven items introduce applied complexity.

Timed simulations replicate pacing expectations and expose endurance gaps that simple quizzes can’t surface.

Randomized question banks vary question selection and order across attempts, so familiarity doesn’t distort results and performance reflects understanding rather than pattern recognition.
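A minimal Python sketch of per-attempt randomization, assuming questions are stored as a flat list of IDs (the IDs and the per-attempt seeding scheme are illustrative assumptions, not a specific product's behavior). `random.Random.sample` draws distinct items and returns them in random order, so both selection and ordering change between attempts.

```python
import random


def draw_attempt(bank: list[str], n: int, attempt_seed: int) -> list[str]:
    """Pick n distinct questions from the bank, in random order, for one attempt.

    Seeding a private generator per attempt (an assumed design choice) means a
    specific attempt can be regenerated later for review, while different
    attempts still get different selections and orderings.
    """
    if n > len(bank):
        raise ValueError("question bank is smaller than the requested attempt length")
    rng = random.Random(attempt_seed)
    return rng.sample(bank, n)


bank = [f"Q{i:03d}" for i in range(1, 51)]  # 50 hypothetical question IDs
first = draw_attempt(bank, 20, attempt_seed=1)
second = draw_attempt(bank, 20, attempt_seed=2)
print(first[:5])
print(second[:5])
```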

Per-domain performance insight elevates assessment quality. An overall score provides limited direction. A breakdown across tested areas identifies where targeted review is required.
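The gap between an overall score and a domain breakdown shows up in a few lines of Python. This sketch assumes each graded response is recorded as a (domain, correct) pair; the domain names and responses are made-up sample data.

```python
from collections import defaultdict


def domain_breakdown(responses: list[tuple[str, bool]]) -> dict[str, float]:
    """Turn (domain, answered_correctly) pairs into percent correct per domain."""
    correct: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for domain, is_correct in responses:
        total[domain] += 1
        correct[domain] += int(is_correct)
    return {d: round(100 * correct[d] / total[d], 1) for d in total}


responses = [
    ("Ethics", True), ("Ethics", True), ("Ethics", False), ("Ethics", True),
    ("Analysis", True), ("Analysis", False), ("Analysis", False), ("Analysis", False),
]
overall = 100 * sum(ok for _, ok in responses) / len(responses)
print(overall)                       # 50.0
print(domain_breakdown(responses))   # {'Ethics': 75.0, 'Analysis': 25.0}
```

A 50% overall score says "keep studying"; the breakdown says "study Analysis."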

When selecting tools, evaluate where this functionality lives. Built-in LMS assessments may meet early-stage needs. Higher-stakes credentials may require specialized engines that support weighted scoring and advanced simulation logic.

Assessment maturity typically progresses through:

- Lesson-level quizzes tied to instruction

- Blueprint-aligned practice exams weighted by tested area

- Full simulations with timing controls, randomized items, and granular analytics

Program standards and regulatory expectations should determine which level is appropriate.
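Weighting a practice exam by tested area mostly comes down to splitting the exam length across domains in proportion to the blueprint. The Python sketch below shows one way to do that, using largest-remainder rounding so the per-domain counts always add up to the exam length. The weights are hypothetical integer percentages, and the rounding method is an assumed choice rather than an industry standard.

```python
def allocate_questions(weights: dict[str, int], exam_length: int) -> dict[str, int]:
    """Split exam_length across domains in proportion to integer blueprint weights.

    Integer arithmetic avoids floating-point drift; leftover questions go to
    the domains with the largest remainders (ties keep blueprint order).
    """
    total = sum(weights.values())
    counts = {d: exam_length * w // total for d, w in weights.items()}
    remainders = {d: exam_length * w % total for d, w in weights.items()}
    leftover = exam_length - sum(counts.values())
    for d in sorted(weights, key=lambda d: remainders[d], reverse=True)[:leftover]:
        counts[d] += 1
    return counts


blueprint = {"Ethics": 15, "Analysis": 35, "Portfolio": 30, "Regulation": 20}  # hypothetical
print(allocate_questions(blueprint, 60))  # {'Ethics': 9, 'Analysis': 21, 'Portfolio': 18, 'Regulation': 12}
print(allocate_questions(blueprint, 25))  # {'Ethics': 4, 'Analysis': 9, 'Portfolio': 7, 'Regulation': 5}
```

Each domain's count can then drive a random draw from that domain's question pool, so every attempt stays blueprint-weighted while its content varies.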

As programs mature, question bank maintenance becomes an ongoing responsibility that needs clear authoring workflows and documented review processes. Official exam blueprints change, sometimes annually, and when they do, every affected question needs to be reviewed, updated, or retired. Without a structured process for managing those updates, content drifts out of alignment quietly: learners prepare for domains that no longer carry the same weight, or miss coverage of newly tested areas entirely. Version control and scheduled content reviews aren’t administrative details. They’re what keeps the program credible when the governing body updates its standards.

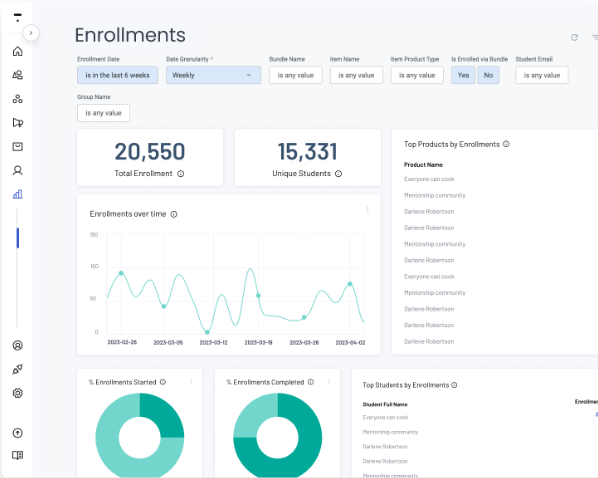

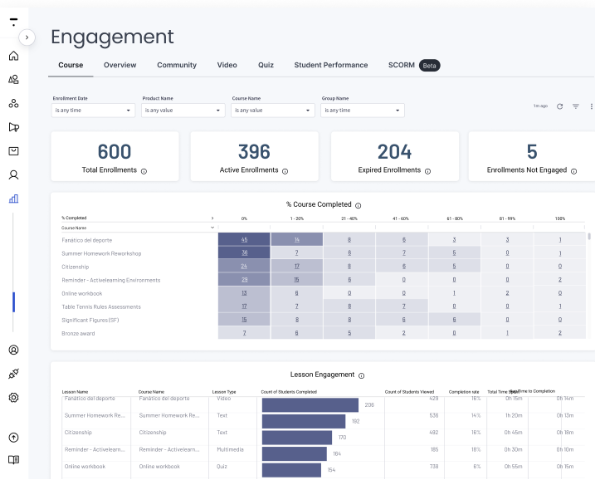

3. Analytics and reporting

Reporting connects learner activity to program decisions, replacing assumption with measurable performance trends across exam domains.

With domain-level performance data, you can see how learners move through the credential framework and where cohorts consistently struggle.
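A sketch of that cohort view in Python, assuming each learner's domain scores are already available as percentages. The 70% target and the sample data are assumptions for illustration, not a recommended standard.

```python
from statistics import mean


def weak_domains(cohort: list[dict[str, float]], target: float = 70.0) -> dict[str, float]:
    """Average each domain's score across a cohort; return domains below target.

    Assumes every learner record has a score for the same set of domains.
    """
    domains = cohort[0].keys()
    averages = {d: mean([learner[d] for learner in cohort]) for d in domains}
    return {d: round(avg, 1) for d, avg in averages.items() if avg < target}


spring_cohort = [
    {"Ethics": 80.0, "Analysis": 60.0, "Regulation": 75.0},
    {"Ethics": 90.0, "Analysis": 55.0, "Regulation": 65.0},
    {"Ethics": 85.0, "Analysis": 65.0, "Regulation": 70.0},
]
print(weak_domains(spring_cohort))  # {'Analysis': 60.0}
```

Running the same function on successive cohorts gives a simple way to spot a domain that is trending down over time.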

Strong performance data surfaces patterns that completion rates never will. You can see which exam domains are consistently weak across a cohort, where learners slow down or disengage before final simulations, and how different cohort groups compare over time. Time-on-task data adds another dimension — whether learners are rushing through critical modules or stalling in areas that need more instructional support.

That visibility also serves a compliance function. Exportable data gives leadership and accreditation reviewers the documentation they need without a manual reconciliation effort before every audit.

Blueprint-aligned reporting surfaces persistent gaps. If one tested area consistently underperforms, you can adjust content depth or refine question design. Over time, cohort comparisons can reveal shifts that signal outdated question banks or misalignment with exam updates. Reporting catches these gaps after the fact. In-course AI teaching assistants, which have started showing up in newer LMS platforms, address them in the moment: they give learners a way forward the moment they get stuck, instead of letting “I don’t know” quietly turn into “I’m out.”

4. Credentialing and certification management

Issuing a document at program completion doesn’t automatically mean you operate a formal certification.

Lower-stakes programs may rely on built-in certificate generation tied to completion rules. Credentials with external recognition carry added responsibility, including verification workflows for employers or governing bodies and expiration tracking when renewal is required.

Regulated industries often require accurate historical records for audits or compliance reviews. Accredited programs also need documented assessment controls, established review procedures, and clear data retention policies.

As accountability increases, the systems tracking and issuing credentials need to handle verification requests, renewal cycles, and a consistent record history that holds up under scrutiny.
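Expiration tracking is the piece that most often ends up in spreadsheets. Here is a minimal Python sketch of the underlying rule, assuming a fixed validity period in whole years and a 60-day renewal reminder window. Both are assumptions; real renewal rules come from the certifying body.

```python
from datetime import date, timedelta


def add_years(d: date, years: int) -> date:
    """Shift a date by whole years, moving Feb 29 to Feb 28 in non-leap years."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def credential_status(issued: date, valid_years: int, today: date,
                      reminder_days: int = 60) -> str:
    """Classify a credential as active, due for renewal, or expired.

    Assumes the credential is valid through its expiration date and that
    renewal reminders start reminder_days before it (an assumed window).
    """
    expires = add_years(issued, valid_years)
    if today > expires:
        return "expired"
    if expires - today <= timedelta(days=reminder_days):
        return "renewal due"
    return "active"


issued = date(2023, 3, 15)
print(credential_status(issued, 2, date(2024, 6, 1)))  # active
print(credential_status(issued, 2, date(2025, 2, 1)))  # renewal due
print(credential_status(issued, 2, date(2025, 4, 1)))  # expired
```

Run daily against every credential record, a rule like this is what replaces manual renewal reminders.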

Certificates and certifications aren’t interchangeable. The distinction shapes what your systems need to handle:

Certificate vs certification

Certificate of completion:

- Completion-based recognition

- No public verification requirement

- No expiration cycle

- Limited regulatory oversight

Formal certification:

- Competency-based validation

- Verification accessible to external stakeholders

- Defined renewal timeline

- Continuing education requirements

- Potential regulatory documentation obligations

Proctoring and exam security

When credential validation carries regulatory weight, exam integrity becomes part of the conversation.

Identity verification confirms that the registered learner is the person completing the assessment. Environment monitoring tools may restrict browser activity or record sessions when standards require it.

Licensure requirements, regulatory bodies, or governing associations often determine whether proctoring is necessary.

You are more likely to need proctoring when:

- The exam affects employment or licensure

- The program operates within a regulated industry

- An external authority mandates defined security standards

Lower-risk knowledge validation programs may not require this level of oversight.

Evaluate proctoring based on exam stakes and regulatory exposure. Introduce it when standards call for formal controls. Where risk is limited, keep the stack lean.

5. Operations and communication

The operations and communication layer covers learner guidance, revenue workflows, and internal governance. These systems don’t deliver instruction, but they directly affect whether learners complete the certification and whether the business runs cleanly.

Communication and learner support

Learners disengage when expectations are unclear or progress stalls, especially in longer exam-prep programs.

Automated reminders reinforce pacing, and progress-triggered notifications direct learners toward the next milestone at the right moment. Cohort updates can maintain accountability and shared momentum.

Support channels also shape completion outcomes. When learners encounter technical or content-related questions, clear help workflows reduce friction and keep preparation on track.

A pre-exam sequence might begin two weeks before a cumulative simulation. The first message reviews blueprint domains. A follow-up highlights practice recommendations based on prior performance. A final reminder outlines timing expectations and preparation steps.
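The sequence can be expressed as offsets from the simulation date. In this Python sketch, only the 14-day start comes from the example above. The 7-day and 1-day offsets, and the exact wording of each topic, are assumptions.

```python
from datetime import date, timedelta

# (days before the simulation, message topic). The 14-day start mirrors the
# example; the 7-day and 1-day offsets are assumed for illustration.
PRE_EXAM_SEQUENCE = [
    (14, "Review of the blueprint domains"),
    (7, "Practice recommendations based on prior performance"),
    (1, "Timing expectations and final preparation steps"),
]


def schedule_sequence(simulation_day: date) -> list[tuple[date, str]]:
    """Return (send date, topic) pairs for one learner's cumulative simulation."""
    return [(simulation_day - timedelta(days=days_before), topic)
            for days_before, topic in PRE_EXAM_SEQUENCE]


for send_on, topic in schedule_sequence(date(2025, 6, 20)):
    print(send_on.isoformat(), topic)
# 2025-06-06 Review of the blueprint domains
# 2025-06-13 Practice recommendations based on prior performance
# 2025-06-19 Timing expectations and final preparation steps
```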

Performance-triggered guidance takes it a step further. If a learner underperforms in a specific domain, the system can route them toward targeted review materials and additional practice.
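Performance-triggered routing is essentially a rule over domain scores. Here is a minimal Python sketch, assuming a 70% review threshold and a hypothetical mapping from domains to review resources. Neither comes from a specific platform.

```python
REVIEW_THRESHOLD = 70.0  # assumed cutoff for "needs targeted review"

# Hypothetical resource IDs; a real platform would point at actual lessons and drills.
REVIEW_MATERIALS = {
    "Ethics": ["ethics-review-lesson", "ethics-drill-set"],
    "Analysis": ["analysis-review-lesson", "analysis-drill-set"],
}


def route_learner(domain_scores: dict[str, float]) -> list[str]:
    """List review items for every domain below the threshold, weakest domain first."""
    weak = sorted((score, domain) for domain, score in domain_scores.items()
                  if score < REVIEW_THRESHOLD)
    return [item for _, domain in weak for item in REVIEW_MATERIALS.get(domain, [])]


print(route_learner({"Ethics": 75.0, "Analysis": 25.0}))
# ['analysis-review-lesson', 'analysis-drill-set']
```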

Email Automation and defined learning pathways formalize these workflows so guidance stays consistent rather than reactive.

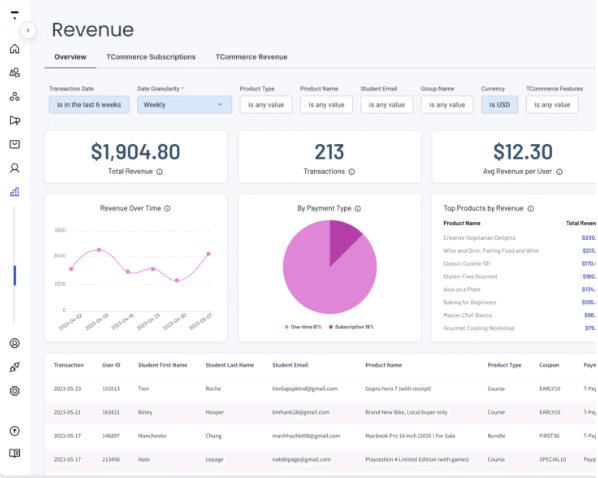

Administrative operations and revenue control

Credential-driven programs operate within defined revenue and governance structures.

Payment systems support one-time exam prep purchases, installment plans, and renewal access for recertification cycles. Enterprise clients often purchase seats in bulk for employee cohorts, which requires efficient seat assignment and oversight.

Role-based permissions clarify responsibilities across instructors, program managers, and support teams.

Tiered access models differentiate between base exam prep, additional simulations, and retake allowances. Access rules should clearly reflect those distinctions.
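Tier rules are easiest to keep clear when they live in one place as data. The Python sketch below uses hypothetical tier names and limits, where `None` stands for an unlimited allowance.

```python
from typing import Optional

# Hypothetical tiers and limits, for illustration only. None means unlimited.
TIERS: dict[str, dict[str, Optional[int]]] = {
    "base":    {"simulations": 1, "retakes": 1},
    "plus":    {"simulations": 3, "retakes": 2},
    "premium": {"simulations": 6, "retakes": None},
}


def within_allowance(tier: str, entitlement: str, used: int) -> bool:
    """True if a learner on `tier` may use one more unit of `entitlement`."""
    limit = TIERS[tier][entitlement]
    return limit is None or used < limit


print(within_allowance("base", "simulations", 1))   # False: the single simulation is used
print(within_allowance("plus", "simulations", 1))   # True
print(within_allowance("premium", "retakes", 10))   # True: unlimited
```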

Governance structures such as permissions, access rules, and seat management tend to be an afterthought in curriculum design, and that’s usually where the friction starts.

Integration and data flow considerations

Integration connects all five layers. Without it, each system operates in isolation and the toolset loses coherence. Every layer generates learner data, and when those systems don’t communicate, performance tracking, credential status, and reporting scatter across tools. That makes leadership reviews and compliance audits harder than they need to be.

Assessment results should feed directly into credential issuance, so a learner’s credential status updates automatically once requirements are met.
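The hand-off from assessment results to credential status can be sketched as a single rule. In this Python sketch, the overall cut score, the per-domain floor, and the `CredentialRecord` shape are all hypothetical. Real requirements come from the program's documented standards.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

PASSING_SCORE = 70.0  # assumed overall cut score
DOMAIN_FLOOR = 60.0   # assumed minimum for every tested domain


@dataclass
class CredentialRecord:
    learner_id: str
    status: str                       # "issued" or "pending"
    issued_on: Optional[date] = None


def evaluate_for_credential(learner_id: str, overall: float,
                            domain_scores: dict[str, float], today: date) -> CredentialRecord:
    """Issue the credential automatically once every requirement is met."""
    requirements_met = overall >= PASSING_SCORE and all(
        score >= DOMAIN_FLOOR for score in domain_scores.values()
    )
    if requirements_met:
        return CredentialRecord(learner_id, "issued", today)
    return CredentialRecord(learner_id, "pending")


print(evaluate_for_credential("L-1001", 78.0, {"Ethics": 82.0, "Analysis": 65.0}, date(2025, 5, 1)))
print(evaluate_for_credential("L-1002", 74.0, {"Ethics": 93.0, "Analysis": 55.0}, date(2025, 5, 1)))
```

The second learner passes overall but stays pending because one domain falls below the floor. That distinction only holds if domain-level results actually reach the credentialing step.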

How openly your systems share data determines how easily they work together. Assessment engines, credentialing platforms, and reporting dashboards should connect without custom development or manual workarounds.

Use this checklist when evaluating integrations:

- Does data sync automatically, or does it require manual export?

- Where does the source of truth for credential validity reside?

- Can reporting aggregate across systems without significant reconciliation effort?

Connected systems reduce administrative burden and make audit preparation straightforward rather than reactive.

In most credential-driven programs, the learning platform acts as the operational center of the stack. Learner progress and assessment outcomes originate there, while credential status serves as the formal validation layer. The clearer your source of truth, the easier it becomes to defend your program during audits or compliance reviews.

Common mistakes certification providers make

Most stack problems aren’t random. They follow predictable patterns.

Over-investing in tooling before curriculum alignment stabilizes is a common misstep. Sophisticated tools can’t compensate for unclear blueprint mapping or weak assessment logic.

Feature-driven decisions also create friction. Tools should support defined workflows rather than dictate them.

Reporting gaps often remain hidden until performance declines. Completion rates alone rarely reveal weaknesses across specific exam domains.

Scattered learner experiences erode confidence. When instruction, practice exams, and credential verification live in disconnected systems, the program feels unreliable, regardless of content quality.

Treating the LMS as the entire stack is the most common ceiling certification programs hit. The platform is the foundation, not the finish line.

How to tell if your current stack is underpowered

Knowing the patterns is one thing. Recognizing them in your own stack is another. These are the signs your current technology infrastructure isn’t keeping up.

Your stack has a problem if:

- Domain performance tracking requires manual spreadsheets

- Practice exams can’t replicate timing, weighting, or realistic exam conditions

- Credential renewals require manual reminders or tracking

- Leadership requests reports that can’t be generated without data exports and reconciliation

When core workflows depend on workarounds, the system is limiting growth instead of supporting it.

Choosing the right stack for your stage

Technology decisions look different depending on where your program stands.

For most programs, buying an integrated platform delivers more value than building custom infrastructure. Custom builds introduce maintenance overhead, require dedicated technical resources, and create fragility when exam blueprints or compliance requirements change. The exception is mature providers with highly specific regulatory requirements that no available platform can meet — and even then, a hybrid approach using an established LMS as the foundation with custom layers on top typically outperforms a full custom build.

A new credential offering doesn’t require the same infrastructure as an established certification serving thousands of learners. The right starting point is the simplest stack that supports blueprint alignment, assessment, and basic reporting — then expand from there as program demands make the case for additional tooling. An LMS is the necessary starting point, but for high-stakes certifications it’s rarely sufficient on its own.

When the budget is constrained, prioritize investments that directly influence preparedness and reporting visibility. A strong learning foundation, paired with meaningful assessment, typically delivers the greatest impact early on. Reporting depth becomes the next priority once leadership or regulatory stakeholders request defensible performance data.

In practice, most programs layer tools in this order:

- Start with learning delivery — structure content around the exam blueprint

- Add assessment depth — practice exams, timing controls, question banks

- Build reporting visibility — domain-level performance data and cohort tracking

- Layer in credential validation as external accountability increases

- Add proctoring when regulatory requirements or exam stakes demand it

Early-stage providers: simplicity and speed

Early programs benefit from focus and flexibility.

At this stage, the priority is validating curriculum alignment, refining assessments, and observing learner performance patterns. A strong learning delivery platform with built-in assessments often supports these needs.

Start with clear blueprint alignment inside your LMS. Use integrated quizzes and practice exams to gather performance data and refine the program based on real learner behavior.

Adding proctoring systems or external credential platforms too early creates unnecessary complexity and pulls focus from improving core instructional design.

Growing programs: flexibility and reporting

As enrollment expands, reporting demands increase.

Leadership starts requesting visibility into performance across cohorts. Corporate clients want exam readiness summaries.

At this stage, deeper analytics and enhanced simulation logic often become necessary. How credentials are tracked and issued may also need refinement as renewal cycles and verification requests increase.

Growth introduces coordination demands that siloed tools make worse. Systems at this stage should consolidate, not multiply.

Mature providers: scalability and integration depth

Established certification offerings often operate in regulated environments or serve enterprise clients.

Formal credential management becomes essential, and verification workflows must operate consistently at scale. Expiration tracking and renewal processing require reliable automation.

Proctoring often becomes mandatory when credentials connect directly to licensure or employment eligibility.

Integration depth becomes the priority as systems multiply. Performance data needs to connect to credentialing systems, reporting dashboards, and external compliance tools without manual reconciliation at every step.

Stack decisions should evolve alongside the program. Adding too much infrastructure too early strains resources. Waiting too long on necessary upgrades limits growth just as much.

Maturity shifts are usually triggered by enrollment growth, regulatory requirements, or reporting demands that current systems can’t meet.

Build for readiness, not complexity

The pressure that opens this conversation — pass rates, credibility, compliance — doesn’t go away when you pick a platform. It goes away when your systems actually support the program you’re running.

Weak stacks rarely fail immediately. Visibility narrows. Adjustments slow down. Pass rates drift. When the impact becomes obvious, it has already reached learner outcomes and stakeholder trust.

Start with a learning environment structured around your exam blueprint and connected to domain-level performance data. Add assessment depth, credential tracking, and operational tooling as your program grows into them. Keep the stack lean until the program demands more.

Thinkific gives certification providers a scalable platform to structure content by exam domain, run timed assessments, and track learner performance at the domain level — without building more infrastructure than the program needs right now. Review Thinkific pricing to find the right plan, or start a free trial and build your first domain-aligned module to see how the structure holds up in practice.

FAQs

What is a certification technology stack?

A certification technology stack is the set of connected tools and platforms a certification provider uses to deliver, assess, credential, and manage exam prep programs. A well-built certification technology stack typically includes a learning delivery platform, assessment and practice exam tools, analytics and reporting, credentialing systems, and operational infrastructure for payments and learner communication. The specific tools in a certification technology stack will vary depending on program maturity, enrollment volume, and regulatory requirements.

What tools do exam prep providers need?

The tools exam prep providers need depend on program stage and exam stakes, but most certification offerings require at least three core components: a learning delivery platform structured around the exam blueprint, assessment tools that support timed practice exams and domain-level performance tracking, and reporting that surfaces where learners need targeted review. More established exam prep providers also need credentialing systems to handle certificate generation and renewal tracking, and automated communication tools to keep learners on pace between milestones.

What should I look for in an LMS for exam prep and certification?

An LMS for exam prep and certification should support course structures that mirror the exam blueprint, with content organized by tested domain rather than topic alone. The right LMS for certification programs also needs integrated assessment capabilities, domain-level performance reporting, and scalability to handle growing learner volumes without creating administrative burden. Look for a platform that supports mixed content formats — including practice exams and knowledge checks — and connects cleanly to any external credentialing or proctoring tools your program depends on.

What online exam prep tools are most important for scaling a certification program?

When scaling a certification program, the most important online exam prep tools are those that reduce manual work while improving performance visibility. Start with a learning platform that has blueprint-aligned course structure and built-in assessment. From there, scaling certification programs typically need deeper analytics to track domain-level performance across cohorts, credentialing infrastructure to handle verification and renewal, and automated communication tools to guide learners through longer preparation cycles. The right online exam prep tools at scale are ones that connect to each other cleanly — disconnected systems create reporting gaps and administrative overhead that compound as enrollment grows.